For the last few weeks, it has felt like the People Who Know Things (or at least Say Things Loudly on the Internet) have come to the conclusion that some kind of AI reckoning is not only inevitable, but already happening.

It’s been a lightning-quick shift from “AI is destroying every norm and metric we recognise and it’s time to learn how to be a plumber” to “It’s a bubble, and this is just the dotcom crash all over again.”

Obviously this is a clunky oversimplification, and don’t get me wrong, the destruction of every metric and norm we recognise is still merrily on its way, but it does feel like the recent censure of Anthropic and its Large Language Model (LLM), Claude, by the United States Department of War has finally and properly kicked open the termite mound.

Let me recap it all very briefly.

The AI arms race

There are basically five (or six, depending on how seriously you take Meta’s Llama) LLMs that are currently fighting it out for our attention, money and daily use: OpenAI’s ChatGPT, Google’s Gemini, Anthropic’s Claude, the Chinese DeepSeek and… ugh, X’s Grok.

Several of these products have been rather central to the US military’s success in kidnapping Venezuelan presidents and launching missile strikes at places it doesn’t like. Claude in particular.

Recently, however, the Pentagon has demanded the removal of internal “guard rails” so that the military can use these LLMs in a more unfettered manner.

OpenAI and Microsoft have said yes. Anthropic has said no.

Anthropic has since been hit with the full force of a Donald Trump tantrum, which has essentially removed it from all levels of the American government supply chain. This is bad for Anthropic’s business, but very good for its claim to the moral high ground in the fight for the widest possible public adoption.

The immediate effect has been a sharp spike in the take-up of Claude and the (possibly temporary) abandonment of ChatGPT.

The new consumer morality test

Given how many people in my own social circles are earnestly talking about why they are dumping ChatGPT, perhaps we can view this as the pivotal moment that shifted AI’s rather backstage infiltration of society to a more public display of the internal dynamics and morality of the companies we’re all paying to accelerate our own obsolescence.

It’s also forcing normies like you and me to start reframing this stuff as a profoundly existential declaration of our own personal morality in an arena where we have virtually no influence.

Your choice of service, whether it be ChatGPT, Gemini, Claude or… ugh, Grok (note, no one is yet suggesting divesting from using LLMs altogether) has taken on the aura of a flag-waving declaration of alignment, the hill you are willing to die on.

But the thing that gets me is that I can’t escape the feeling that this is basically just plastic straws all over again.

The final straw

You see, when that video of the turtle with a plastic straw stuck in its nose went viral, it became an instant line in the sand for personal ethics and responsibility. Using a plastic straw became the equivalent of wading out into the sea and shunting it up the nose of the nearest turtle with your own bare hands.

Being “anti-plastic straw” was the easiest way to broadcast that you were against the wanton destruction of nature. In response, corporations all fell over themselves to dump plastic straws – while largely keeping the rest of their behaviour intact and gratefully doing a Homer Simpson and quietly disappearing into the nearest hedge – as we all blamed each other for exactly how much of the North Pacific Gyre we’d contributed to by not separating paper from plastic in our dustbins.

The deep frustration I have with that responsibility/behaviour loop is that it made millions of regular people feel personally responsible for a supply chain they had no role in designing. Ordinary humans were handed the guilt for something that was conceived of and created completely upstream from them.

I resent that guilt, while also despising my own willing ignorance.

The shame always trickles down

The greatest trick that the deception of trickle-down economics ever pulled is that it’s the shame and the consequences that trickle down, not the money.

And so we are back again at the coalface of personal practice, demonstrating that you’re aligning yourself, if not with the “good guys”, then at least with “the least obviously and despicably evil”. And as it stands, the easiest way to assign moral guilt is to shame the last person who used ChatGPT to turn their cat into a rip-off Studio Ghibli cartoon until they do it with a different LLM instead. Modern moral debates always focus on the consumer. Even when the system has already been decided by capital.

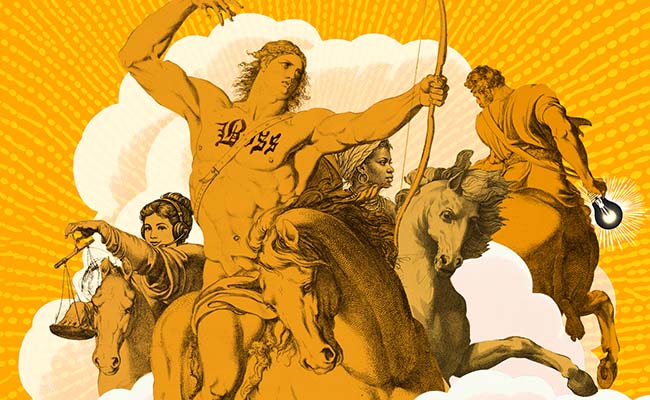

Feeding the AI lions

Because here’s the thing: OpenAI, ChatGPT’s parent company, has just raised $110bn in its most recent round of funding. So, whatever the dip in its user base, it has the time and the capital to turn public opinion back in its favour with every tool at its considerable disposal. Having $110bn in the kitty creates a certain level of strategic patience.

In the meantime, the debate has shifted from whether the technology should even exist (that train left the station actual decades ago) to a more nervous conversation about which of the hungry lions we’re paying to cheat on our homework we’d prefer to be eaten by. That shouldn’t be mistaken for actual power. We can no longer kill behaviour; we can only sort of hope to steer it. If clinging on for dear life counts as “steering”.

So, yes. Take your money and attention and choose which lion you’re going to feed them to. But never forget that they will all eat us in the end.

Love Jono’s work? Read more here.

Top image: Rawpixel/Currency collage.
